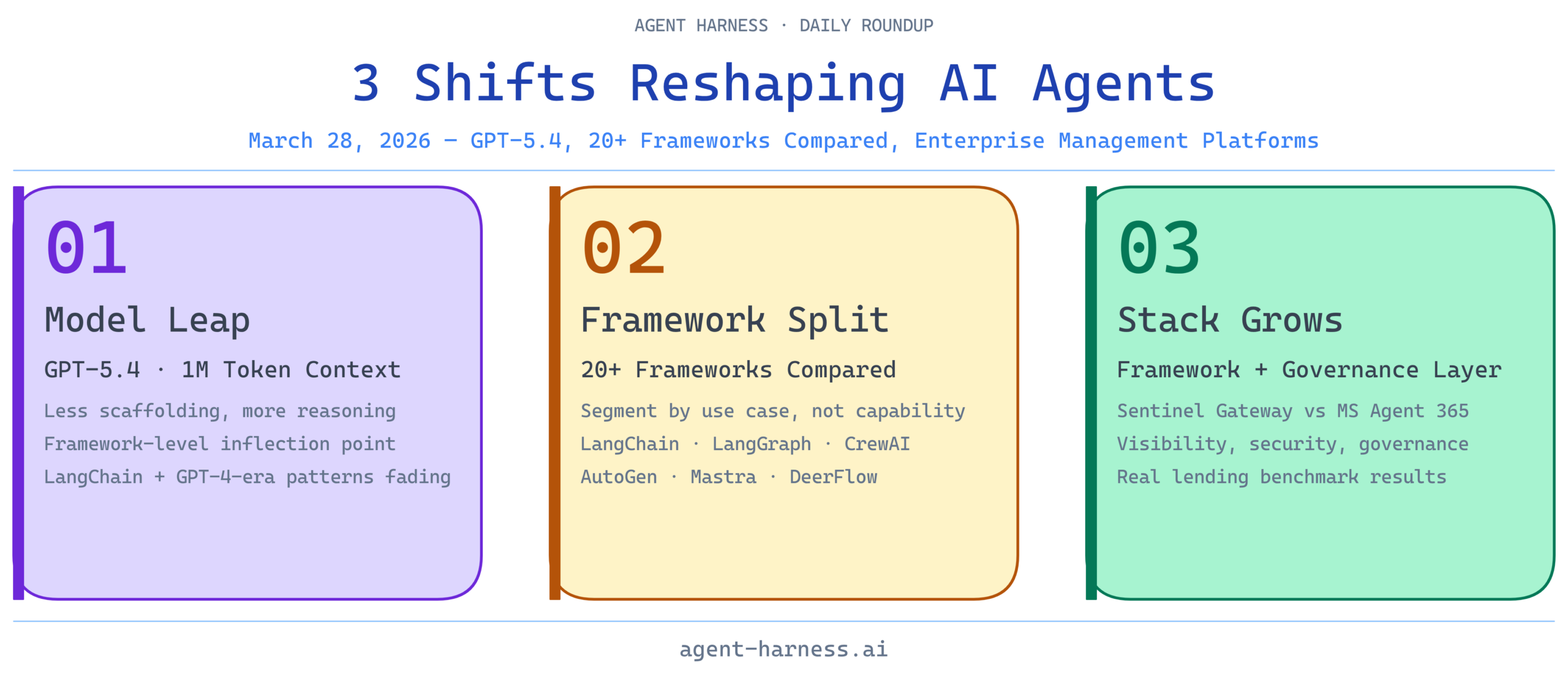

The AI agent ecosystem continues to accelerate this week with major model releases, comprehensive framework comparisons, and real-world performance benchmarks emerging across the industry. GPT-5.4’s arrival marks a significant inflection point for agentic AI capabilities, while the growing number of competing frameworks is forcing developers to think critically about which orchestration layer fits their use case. Below, we’ve tracked the week’s most relevant developments for anyone building with or evaluating agent frameworks.

1. LangChain Maintains Industry Momentum

LangChain’s continued prominence in agent engineering underscores its staying power as the default choice for developers building agentic systems. The framework’s extensibility and integration ecosystem make it the foundation layer that other tools build upon. As the baseline that most other frameworks compare themselves against, LangChain’s evolution directly shapes how the entire agent orchestration landscape develops.

Analysis: LangChain’s dominance isn’t about having the best primitives—it’s about ecosystem gravity. Every new agent framework we evaluate either integrates with LangChain or explicitly positions itself as an alternative. For teams starting with agents, LangChain remains the pragmatic choice, though increasingly it’s being combined with specialized tools like LangGraph for agentic workflows. The real question isn’t whether to use LangChain, but which layers to add on top of it.

2. GPT-5.4 Benchmarks: New King of Agentic AI

OpenAI’s GPT-5.4 represents a meaningful leap in model-level agentic capabilities, with performance gains that directly affect how frameworks need to be designed. The expanded context window and improved instruction-following make the model more reliable as an agent core. This isn’t just about raw benchmark numbers; it’s about reducing the scaffolding and error handling that frameworks currently build around weaker models.

Analysis: From a framework perspective, GPT-5.4’s improved reliability changes the calculus of agent design. Frameworks can now rely more on model intelligence and less on procedural guardrails. This potentially simplifies tool-calling chains and reduces the need for fallback mechanisms. However, this also means frameworks need to evolve quickly to take advantage of these capabilities—the window where GPT-4-era agent patterns remain optimal is closing.
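To make “procedural guardrails” and “fallback mechanisms” concrete, here is a minimal Python sketch of the kind of scaffolding that gets wrapped around a model call: validate the output, retry, then fall back to another model. Everything in it is an illustrative assumption rather than any framework’s actual API. The names `call_with_fallback`, `flaky_model`, `reliable_model`, and `looks_like_tool_call` are hypothetical, and the two “models” are plain functions standing in for real clients.

```python
from typing import Callable, Sequence


def call_with_fallback(
    prompt: str,
    models: Sequence[Callable[[str], str]],
    is_valid: Callable[[str], bool],
    max_attempts_per_model: int = 2,
) -> str:
    """Try each model in order, retrying until an output passes validation."""
    last_error = None
    for model in models:
        for _ in range(max_attempts_per_model):
            try:
                output = model(prompt)
            except Exception as exc:  # a real system would catch narrower errors
                last_error = exc
                continue
            if is_valid(output):
                return output
    raise RuntimeError("no model produced a valid output") from last_error


# Hypothetical stand-ins for real model clients.
def flaky_model(prompt: str) -> str:
    return "not json"


def reliable_model(prompt: str) -> str:
    return '{"tool": "search", "query": "' + prompt + '"}'


def looks_like_tool_call(text: str) -> bool:
    """Very loose check that the output resembles a JSON tool call."""
    return text.startswith("{") and '"tool"' in text


result = call_with_fallback(
    "rates", [flaky_model, reliable_model], looks_like_tool_call
)
print(result)
```

If the primary model passes validation on the first attempt most of the time, the retry and fallback branches simply run less often. That is the sense in which this layer can shrink as models improve, although the validation step itself is still worth keeping.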

3. 5 Crazy AI Updates This Week

The broader AI landscape this week confirms that agentic AI is moving from experimental to production-grade infrastructure. Beyond GPT-5.4, we’re seeing a wave of supporting infrastructure improvements that make it easier to build reliable agents at scale. These incremental updates across the ecosystem create compounding advantages for frameworks that integrate them early.

Analysis: Individual feature releases matter less than the trend they represent: the industry is collectively investing in making agents work reliably in production. This is the environment where frameworks like LangGraph and AutoGen thrive—they’re optimizing for the complexity that emerges when you move from proof-of-concept to real workloads. For framework evaluators, pay attention to which tools are first to integrate these new capabilities.

4. OpenAI Drops GPT-5.4 – 1 Million Tokens + Pro Mode

The 1 million token context window is the headline, but the real story is GPT-5.4’s improved performance on agentic tasks within those tokens. Pro mode adds another layer of capability targeting advanced reasoning. For agent builders, this means you can now load entire workflows, API schemas, and conversation histories into a single context without token management gymnastics.

Analysis: This is a framework-level inflection point. Many agent orchestration patterns exist because we need to work around token limits. With 1 million tokens, the constraint shifts from context size to prompt engineering and response latency. Frameworks need to evolve from “token-aware” designs to “reasoning-aware” designs. Teams building with older frameworks should stress-test how their abstractions hold up when context windows are no longer the limiting factor.
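A “token-aware” design usually starts with a budget check like the sketch below, which estimates whether a set of context parts fits in a given window. It is a rough illustration under stated assumptions: the four-characters-per-token ratio is a crude heuristic rather than a real tokenizer, the 128,000-token window is an arbitrary stand-in for a smaller context limit, and `estimate_tokens` and `plan_context` are hypothetical helper names.

```python
from typing import Dict

CHARS_PER_TOKEN = 4  # rough heuristic (assumption); real tokenizers vary by model


def estimate_tokens(text: str) -> int:
    """Approximate the token count from character length."""
    return max(1, len(text) // CHARS_PER_TOKEN)


def plan_context(parts: Dict[str, str], window: int, reserve_for_output: int) -> dict:
    """Report per-part token usage and whether everything fits in the window."""
    budget = window - reserve_for_output
    usage = {name: estimate_tokens(text) for name, text in parts.items()}
    total = sum(usage.values())
    return {"usage": usage, "total": total, "budget": budget, "fits": total <= budget}


# Placeholder payloads sized to resemble a large agent context.
parts = {
    "api_schemas": "s" * 40_000,
    "conversation_history": "h" * 600_000,
}

smaller = plan_context(parts, window=128_000, reserve_for_output=8_000)
larger = plan_context(parts, window=1_000_000, reserve_for_output=8_000)
print(smaller["fits"], larger["fits"])
```

With the larger window the same payload fits without trimming or summarizing, so the interesting question moves from “what do we drop?” to “how long does the model take to respond, and does it use all of that context well?”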

5. Sentinel Gateway vs MS Agent 365: AI Agent Management Platform Comparison

The emergence of dedicated agent management platforms signals that the raw framework layer is becoming commoditized—the new competitive differentiation is in orchestration, governance, and observability. Sentinel Gateway and MS Agent 365 represent two different philosophies: specialized tooling versus integrated enterprise platforms. The discussion highlights what buyers actually care about: security, operational visibility, and deployment flexibility.

Analysis: This conversation reveals a gap between what framework developers optimize for and what enterprises need. LangChain and AutoGen excel at developer experience; Sentinel and Agent 365 excel at operational control. Smart teams are increasingly adopting both—using an open-source framework as the foundation and wrapping it with an enterprise management layer. The framework question is no longer “which is best?” but “which is best for my layer of the stack?”
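The “wrap a framework with a management layer” pattern can be sketched in a few lines of Python. This is a toy illustration only: it does not reflect how Sentinel Gateway or MS Agent 365 actually work, and `GovernedAgent` and `demo_agent` are hypothetical names. The idea is simply that the agent stays unchanged while a thin outer layer enforces a tool allow-list and records an audit trail.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set


@dataclass
class GovernedAgent:
    """Wraps an agent callable with a tool allow-list and an audit trail."""

    agent: Callable[[str, str], str]  # (tool_name, argument) -> result
    allowed_tools: Set[str]
    audit_log: List[Dict[str, str]] = field(default_factory=list)

    def run(self, tool: str, argument: str) -> str:
        if tool not in self.allowed_tools:
            # Record the attempt before refusing it.
            self.audit_log.append({"tool": tool, "status": "blocked"})
            raise PermissionError(f"tool '{tool}' is not permitted")
        result = self.agent(tool, argument)
        self.audit_log.append({"tool": tool, "status": "allowed"})
        return result


def demo_agent(tool: str, argument: str) -> str:
    """Hypothetical stand-in for an agent built with any framework."""
    return f"{tool}({argument}) ok"


governed = GovernedAgent(agent=demo_agent, allowed_tools={"search"})
print(governed.run("search", "agent frameworks"))

try:
    governed.run("delete_records", "all")
except PermissionError as exc:
    print("blocked:", exc)

print(governed.audit_log)
```

Keeping policy and logging outside the agent is what lets a team swap the underlying framework without rewriting its governance rules.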

6. Comprehensive Comparison of Every AI Agent Framework in 2026

A comprehensive comparison across 20+ frameworks is exactly the kind of resource the industry needs right now. The sheer number of options reflects both the nascency of the space and the fact that different use cases genuinely benefit from different architectural approaches. LangChain, LangGraph, CrewAI, AutoGen, Mastra, and DeerFlow each optimize for different developer personas and problem domains.

Analysis: This is the meta-trend: the framework market is segmenting by use case rather than competing on general-purpose capability. You’re no longer choosing “the best” framework; you’re choosing the right tool for your specific problem. Multi-agent systems are a natural fit for AutoGen or CrewAI. Data-heavy agentic workflows can benefit from CrewAI’s role-based architecture. Single-agent reasoning tasks might not need a framework at all. The comprehensive comparison is useful precisely because it helps you understand what each tool is optimized for, not which one is objectively superior.

7. The Rise of the Deep Agent: What’s Inside Your Coding Agent

The distinction between simple LLM workflows and deep agents is crystallizing. A coding agent isn’t just a model with file access—it’s a system that understands context, can reason about trade-offs, and builds mental models of codebases. This depth is what separates experimental code-writing tools from production-grade autonomous systems. The frameworks supporting this depth—LangGraph, AutoGen with code execution—are where the sophistication lives.

Analysis: “Deep agent” is becoming the operative term for systems that go beyond prompt-and-respond. For framework evaluation, ask: does this framework make it easy to build agents with persistent context, error recovery, and multi-step planning? LangGraph excels here with its state management primitives. AutoGen with code execution environments enables the kind of stateful, reasoning-heavy agents discussed in this video. Simpler frameworks like basic LangChain chains are increasingly inadequate for this class of problem.
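The three properties named above (persistent context, error recovery, and multi-step planning) can be shown without any framework at all. The sketch below is plain Python, not LangGraph’s or AutoGen’s API, and every name in it (`run_plan`, `read_file`, `flaky_analyze`) is a hypothetical illustration. State is a dictionary that records which step to run next, failed steps are retried, and the final state serializes to JSON so a run could be checkpointed and resumed.

```python
import json
from typing import Callable, Dict, List

State = Dict[str, object]
Step = Callable[[State], State]


def run_plan(steps: List[Step], state: State, max_retries: int = 2) -> State:
    """Run steps in order, retrying failures and recording where to resume."""
    start = int(state.get("next_step", 0))
    for index in range(start, len(steps)):
        for attempt in range(max_retries + 1):
            try:
                # Pass a shallow copy so a failing step cannot half-update state.
                state = steps[index](dict(state))
                break
            except Exception as exc:
                state.setdefault("errors", []).append(f"step {index}: {exc}")
                if attempt == max_retries:
                    state["next_step"] = index  # resume here on the next run
                    return state
        state["next_step"] = index + 1
    return state


calls = {"count": 0}


def read_file(state: State) -> State:
    state["source"] = "def add(a, b): return a - b"
    return state


def flaky_analyze(state: State) -> State:
    """Fails on its first call to simulate a transient model error."""
    calls["count"] += 1
    if calls["count"] == 1:
        raise TimeoutError("model timed out")
    state["finding"] = "add() subtracts instead of adding"
    return state


final = run_plan([read_file, flaky_analyze], {})
checkpoint = json.dumps(final)  # persistable snapshot of the whole run
print(final["next_step"], final["errors"])
```

Graph-based frameworks add much more on top of this (branching, parallelism, human approval steps), but the question to ask of any of them is the same: where does the state live, and what happens to it when a step fails?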

8. Benchmarked AI Agents on Real Lending Workflows

Real-world benchmarking data is the antidote to marketing claims. A case study evaluating agents against actual lending workflows—with real APIs, regulatory constraints, and data volume—provides insights that synthetic benchmarks simply can’t. This is the kind of grounded, practical validation that helps teams understand which frameworks actually perform in production contexts they care about.

Analysis: Financial services benchmarking is particularly revealing because it combines high stakes, measurable outcomes, and genuine operational constraints. If you’re building agents in regulated industries, real-world benchmarks like these matter more than synthetic benchmark suites. The implicit question here is: which frameworks handle stateful, multi-step financial workflows with the reliability required for production lending systems? This case study helps answer that by showing performance on actual loan processing pipelines rather than toy examples.
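For teams that want to run this kind of workflow-specific evaluation themselves, the core harness is small. The sketch below is a hypothetical illustration, not the methodology or data from the case study: `evaluate` and `toy_agent` are made-up names, the 0.4 debt-to-income threshold is an arbitrary placeholder, and the three cases are invented to show the mechanics.

```python
from typing import Callable, Dict, List, Tuple


def evaluate(agent: Callable[[Dict], Dict], cases: List[Dict]) -> Dict[str, object]:
    """Run an agent over named cases and collect the pass rate and failures."""
    failures: List[Tuple[str, str]] = []
    for case in cases:
        try:
            output = agent(case["input"])
        except Exception as exc:
            # A crash on realistic input counts as a failure, not a skipped case.
            failures.append((case["name"], f"error: {exc!r}"))
            continue
        if output != case["expected"]:
            failures.append((case["name"], f"got {output}"))
    passed = len(cases) - len(failures)
    return {"pass_rate": passed / len(cases), "failures": failures}


def toy_agent(application: Dict) -> Dict:
    """Placeholder decision rule standing in for a real agent."""
    ratio = application["debt"] / application["income"]
    return {"decision": "approve" if ratio <= 0.4 else "refer"}


cases = [
    {"name": "low_dti", "input": {"income": 5000, "debt": 1000},
     "expected": {"decision": "approve"}},
    {"name": "high_dti", "input": {"income": 5000, "debt": 3000},
     "expected": {"decision": "refer"}},
    {"name": "missing_income", "input": {"debt": 100},
     "expected": {"decision": "refer"}},
]

report = evaluate(toy_agent, cases)
print(report["pass_rate"], report["failures"])
```

The third case is the important one: incomplete applications are routine in real workflows, and a harness built from your own edge cases will surface that kind of failure in a way a general-purpose benchmark will not.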

The Week in Framework Context

Three themes emerge from this week’s developments:

First, model capability is rising faster than framework sophistication. GPT-5.4 and its expanded context window are changing what’s possible at the model layer faster than frameworks are adapting. This creates both risk and opportunity—teams using frameworks optimized for older models may find themselves with unnecessary complexity, while teams building custom solutions face pressure to formalize their patterns into reusable frameworks.

Second, the framework market is segmenting by use case rather than consolidating around a single winner. The comprehensive framework comparison captures this perfectly. LangChain remains foundational, but LangGraph for state management, CrewAI for multi-agent orchestration, AutoGen for complex reasoning, and specialized platforms like Sentinel for enterprise governance are all thriving. The “best” framework depends entirely on your problem.

Third, production-grade agent systems require more than a framework. They need management platforms for governance, benchmarking data for validation, and architectural patterns proven in real-world contexts. Sentinel vs. MS Agent 365, the lending benchmarks, and the deep agent discussion all point to a maturing industry where framework choice is necessary but insufficient. You need orchestration, visibility, and validation layers on top.

For practitioners: If you’re evaluating frameworks this week, ground your evaluation in specific use cases rather than general-purpose benchmarks. Use the GPT-5.4 benchmarks to understand what’s possible at the model layer, then assess how much additional scaffolding your framework of choice requires. If you’re already committed to a framework, focus on which management and governance layers make the most sense for your operational context.

The agent framework space remains genuinely unsettled, but it’s settling around clear patterns. That’s progress.