Your team has spent three weeks evaluating AI agent frameworks. You’ve read every comparison article, watched the conference talks, and prototyped in two different tools. And you’re no closer to a decision than when you started.

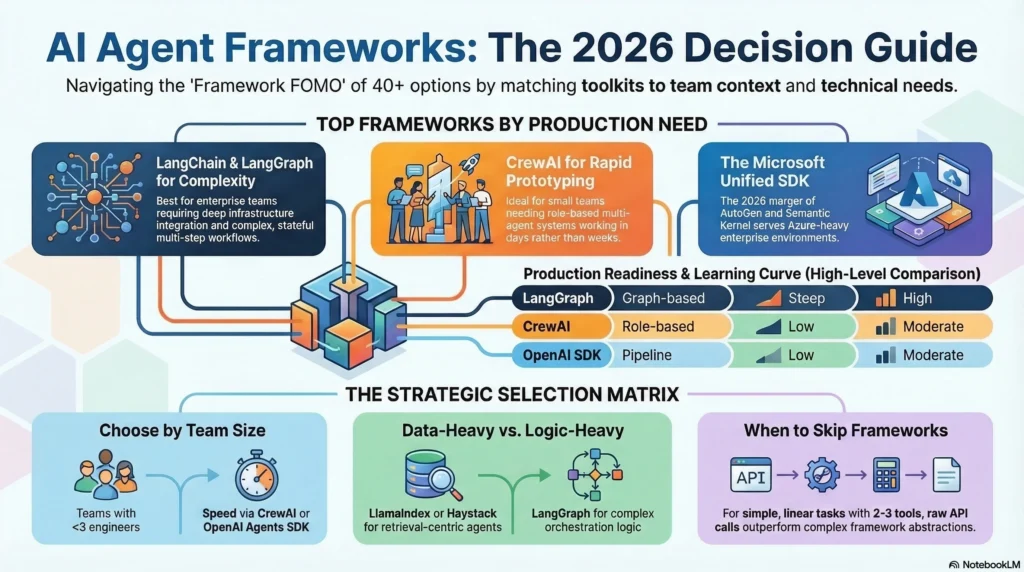

This is the framework FOMO problem. With over 40 agent frameworks competing for your attention in 2026, the evaluation process itself becomes the bottleneck. One engineering lead described it as “spending more time picking the wrench than turning the bolt.”

This guide cuts through that. We’ve tested 10 AI agent frameworks in production environments, measured what actually matters, and built a decision matrix that maps framework strengths to team contexts. You’ll leave with a clear recommendation for your specific situation, not a feature checklist.

If you want weekly framework reviews and production insights delivered to your inbox, subscribe to our newsletter.


What Is an AI Agent Framework (And What It Is Not)

Before comparing frameworks, we need to clarify what we’re actually evaluating. The terms “framework,” “harness,” and “runtime” get used interchangeably in most comparison articles. They shouldn’t be.

An AI agent framework provides the libraries, abstractions, and building blocks for designing agent logic. It defines how agents reason, use tools, and coordinate. Think of it as the blueprint. LangChain, CrewAI, and AutoGen are frameworks.

An agent harness is the infrastructure that manages a running agent’s execution, state, memory, and safety in production. It’s the facility where the agent works. The harness handles what happens when the agent encounters an API timeout at step 47 of a 200-step task. For a deeper look at why this distinction matters, see our complete guide to agent harness.
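Here is a concrete version of the step-47 scenario: a minimal Python sketch of one thing a harness does, which is retrying a timed-out step with exponential backoff instead of failing the whole task. It uses only the standard library. `run_step_with_retry` and `StepFailed` are hypothetical names, not part of any framework covered here, and a production harness would also cap total wait time and persist progress.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class StepFailed(Exception):
    """Hypothetical harness error: a step still failed after all retries."""


def run_step_with_retry(
    step: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run one agent step, retrying on TimeoutError with exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return step()
        except TimeoutError as exc:
            if attempt == max_attempts:
                raise StepFailed(f"step failed after {attempt} attempts") from exc
            # Wait base_delay, 2 * base_delay, 4 * base_delay, ... between attempts.
            sleep(base_delay * 2 ** (attempt - 1))
    raise AssertionError("unreachable: the loop always returns or raises")
```

Passing `sleep` as a parameter keeps the function testable: a test can record the delays instead of actually waiting.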

An agent runtime is the execution environment that ensures stable process management. It handles compute allocation, scheduling, and system-level concerns.

Most teams need all three layers. The mistake is picking a framework and assuming it covers the other two. It rarely does.

The 10 AI Agent Frameworks That Matter in 2026

We selected these frameworks based on production adoption, community momentum, and documented enterprise use. For each, we cover what it does well, who it’s built for, and where it breaks.

1. LangChain + LangGraph

What it does: The most comprehensive agent toolkit available. LangChain provides 600+ integrations with LLMs, vector stores, tools, and databases. LangGraph adds graph-based workflow orchestration for complex, stateful agent systems.

Best for: Teams building complex, multi-step agent workflows that need deep integration with existing infrastructure. Enterprise teams already invested in the LangChain ecosystem.

Where this breaks: The abstraction overhead is significant. Debugging a LangChain agent in production often means tracing through multiple abstraction layers to find where a tool call failed. The learning curve is steep; plan for 2-3 weeks of ramp-up before your team is productive. Token overhead from the orchestration layer adds 10-15% to your costs on complex chains.

Production readiness: High. LangSmith provides best-in-class observability. LangGraph’s checkpointing enables durable execution. 47M+ PyPI downloads and the largest community.
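LangGraph's core idea is that steps update a shared state and edges decide what runs next. You can illustrate it in a few lines of plain Python. The sketch below is not LangGraph's API: `TinyGraph`, `add_step`, `add_router`, and `END` are hypothetical names chosen for illustration. Real graph frameworks add checkpointing, streaming, and parallel branches on top.

```python
from typing import Any, Callable, Dict

State = Dict[str, Any]
END = "__end__"  # sentinel name meaning "stop the workflow"


class TinyGraph:
    """Toy state graph: steps return state updates, routers choose the next step."""

    def __init__(self) -> None:
        self.steps: Dict[str, Callable[[State], State]] = {}
        self.routers: Dict[str, Callable[[State], str]] = {}

    def add_step(self, name: str, fn: Callable[[State], State]) -> None:
        self.steps[name] = fn

    def add_router(self, name: str, router: Callable[[State], str]) -> None:
        self.routers[name] = router

    def run(self, start: str, state: State, max_steps: int = 50) -> State:
        current = start
        for _ in range(max_steps):
            # Merge the step's partial update into the shared state.
            state = {**state, **self.steps[current](state)}
            # The router inspects the new state to pick the next step (or END).
            current = self.routers[current](state)
            if current == END:
                return state
        raise RuntimeError("workflow did not reach END within max_steps")


graph = TinyGraph()
graph.add_step("draft", lambda s: {"drafts": s["drafts"] + 1})
graph.add_router("draft", lambda s: "review")
graph.add_step("review", lambda s: {"approved": s["drafts"] >= 2})
graph.add_router("review", lambda s: END if s["approved"] else "draft")

final_state = graph.run("draft", {"drafts": 0})
```

Starting at "draft", the run goes draft, review, draft, review and ends with `{"drafts": 2, "approved": True}`. The loop from review back to draft is the kind of conditional, cyclic control flow that linear chains struggle to express.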

2. CrewAI

What it does: Role-based multi-agent orchestration. You define agents with specific roles, goals, and backstories, then let them collaborate on tasks. The abstraction matches how humans think about team coordination.

Best for: Teams prototyping multi-agent systems quickly. Business workflow automation where agent roles map naturally to job functions. Organizations that want to get something working in days, not weeks.

Where this breaks: The role-based model starts failing past 4-5 agents in a single crew. Communication between agents becomes implicit and hard to debug. If your workflow needs fine-grained control over agent interaction order, CrewAI’s delegation model fights you. Enterprise governance features are limited compared to LangChain’s ecosystem.

Production readiness: Moderate. Good for small-to-medium agent teams. Scale challenges emerge with complex orchestration needs.

3. Microsoft Agent Framework (AutoGen + Semantic Kernel)

What it does: In early 2026, Microsoft merged AutoGen and Semantic Kernel into a unified agent SDK. AutoGen’s multi-agent conversation patterns combine with Semantic Kernel’s enterprise integration layer. The no-code AutoGen Studio remains available for non-technical teams.

Best for: Enterprise teams in Microsoft-heavy environments. Teams building conversational multi-agent systems with group decision-making patterns. Mixed technical/non-technical teams (thanks to AutoGen Studio).

Where this breaks: The merger created temporary documentation confusion. Some AutoGen 0.2 patterns don’t transfer cleanly to the new SDK. The framework’s strength in conversational patterns becomes a limitation when you need non-conversational workflows. Azure dependency for full feature access narrows deployment options.

Production readiness: Moderate-High. Strong enterprise support from Microsoft, but the SDK merger means some rough edges in 2026.

4. OpenAI Agents SDK

What it does: OpenAI’s native agent framework built on the Responses API. Tight integration with GPT models, built-in tool use, and first-party guardrails. The simplest path from “I have an OpenAI API key” to “I have a working agent.”

Best for: Teams standardized on OpenAI models. Simple single-agent workflows that need quick deployment. Prototyping before committing to a more complex framework.

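Where this breaks: Model lock-in is the obvious risk. The SDK can be configured to use other model providers, but its hosted tools and defaults are built around OpenAI's models, so moving to Claude or an open-source model can still mean reworking your agent logic. Multi-agent orchestration is basic compared to LangGraph or CrewAI. Observability beyond the built-in tracing typically requires third-party additions.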

Production readiness: Moderate. Solid for single-agent systems. Multi-agent and enterprise features are maturing.

5. Claude Agent SDK (Anthropic)

What it does: Anthropic’s general-purpose agent harness, optimized for coding and tool-using tasks. Built-in context management with automatic conversation compaction, extended thinking capabilities, and MCP integration for tool access.

Best for: Teams building coding agents or tool-heavy agent systems. Organizations that prioritize safety and alignment in their agent stack. Teams adopting MCP as their tool integration standard.

Where this breaks: Anthropic model dependency, similar to OpenAI’s lock-in problem. The SDK is younger than LangChain’s ecosystem, so fewer third-party integrations exist. Documentation is improving but gaps remain for advanced use cases.

Production readiness: Moderate-High. Strong for coding and research agents. Growing ecosystem through MCP adoption.
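Automatic conversation compaction is worth understanding even if you never use the Claude Agent SDK. The sketch below shows the general technique, not the SDK's implementation. Once the history exceeds a token budget, older messages are replaced with a single summary, and the most recent turns are kept verbatim. Word counts stand in for a real tokenizer. `summarize` is a hypothetical callback that would normally call a model.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


def approx_tokens(messages: List[Message]) -> int:
    # Crude stand-in for a tokenizer: count whitespace-separated words.
    return sum(len(m["content"].split()) for m in messages)


def compact(
    messages: List[Message],
    budget: int,
    keep_recent: int,
    summarize: Callable[[List[Message]], str],
) -> List[Message]:
    """Replace older messages with one summary once the history exceeds budget."""
    if keep_recent < 1:
        raise ValueError("keep_recent must be at least 1")
    if approx_tokens(messages) <= budget or len(messages) <= keep_recent:
        return messages  # nothing to compact
    older, recent = messages[:-keep_recent], messages[-keep_recent:]
    summary = {
        "role": "system",
        "content": "Summary of earlier conversation: " + summarize(older),
    }
    return [summary] + recent
```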

6. Semantic Kernel (Standalone)

What it does: Microsoft’s SDK for integrating LLMs with conventional programming. Supports C#, Python, and Java. Strong emphasis on enterprise patterns like dependency injection, kernel functions, and type-safe tool definitions.

Best for: .NET teams. Enterprise organizations that need LLM capabilities integrated into existing C# or Java applications. Teams that want familiar software engineering patterns applied to AI agents.

Where this breaks: Multi-agent capabilities are limited compared to dedicated multi-agent frameworks. The enterprise-first design makes it heavier than needed for simple agents. Python support lags behind C#.

Production readiness: High for enterprise integration. Lower for pure multi-agent systems.

7. LlamaIndex

What it does: Originally a data indexing framework, LlamaIndex has evolved into a capable agent platform. Excels at building agents that reason over documents, databases, and structured data. Strong RAG integration.

Best for: Teams building knowledge-intensive agents. Applications where the agent needs to query, synthesize, and reason over large document collections. RAG-first architectures that need agent capabilities on top.

Where this breaks: Agent orchestration is secondary to data querying. If your agent needs complex multi-step tool use without a data retrieval core, LlamaIndex adds unnecessary complexity. Multi-agent support is more basic than LangGraph or CrewAI.

Production readiness: Moderate-High for data-centric agents. Less proven for general-purpose agent systems.

8. Haystack (deepset)

What it does: A production-grade framework for building retrieval and agent pipelines. Component-based architecture in which modular components are connected into pipelines. Strong focus on evaluation and testing.

Best for: Teams building production RAG and retrieval-augmented agents. Organizations that prioritize pipeline testability and reproducibility. Teams that want to compose custom pipelines from modular components.

Where this breaks: Less intuitive for pure agent systems without a retrieval component. The pipeline abstraction adds overhead for simple agent tasks. Smaller community than LangChain, which means fewer pre-built integrations.

Production readiness: High. Designed for production from the start. Strong evaluation and testing tooling.

9. Dify

What it does: An open-source platform that combines visual workflow building with AI agent capabilities. Provides a web-based IDE for designing, testing, and deploying agent workflows without deep coding.

Best for: Teams that want visual agent design. Organizations with mixed technical and non-technical stakeholders. Rapid prototyping environments where visual iteration speeds feedback cycles.

Where this breaks: The visual interface becomes limiting for complex agent logic. Custom tool integration requires dropping into code anyway. Performance overhead from the visual runtime layer. Enterprise security features are still maturing.

Production readiness: Moderate. Good for internal tools and prototypes. Enterprise deployment options are growing.

10. OpenDevin (now OpenHands)

What it does: An open-source autonomous coding agent platform. Provides a full development environment where AI agents can write code, run terminal commands, browse the web, and interact with APIs.

Best for: Teams building autonomous coding assistants. Research groups studying agent behavior in software development. Organizations that want an open-source alternative to proprietary coding agents.

Where this breaks: Narrowly focused on software development tasks. Not suitable for general-purpose agent applications. Resource-intensive; requires substantial compute. Safety boundaries are still being refined for production use.

Production readiness: Low-Moderate. Active development. Better suited for research and controlled environments than mission-critical production.

Framework Comparison Matrix

| Framework | Architecture | Multi-Agent | MCP Support | Learning Curve | Ecosystem | Production Ready | Pricing |
|---|---|---|---|---|---|---|---|
| LangChain/LangGraph | Graph-based | Strong | Yes | Steep | Largest (600+ integrations) | High | Open source + paid observability |
| CrewAI | Role-based | Strong | Partial | Low | Growing | Moderate | Open source |
| Microsoft Agent Framework | Conversation-based | Strong | Partial | Moderate | Large (Azure ecosystem) | Moderate-High | Open source + Azure costs |
| OpenAI Agents SDK | Pipeline | Basic | Yes | Low | Medium (OpenAI ecosystem) | Moderate | API costs |
| Claude Agent SDK | Harness | Moderate | Native | Low-Moderate | Growing (MCP) | Moderate-High | API costs |
| Semantic Kernel | Plugin-based | Basic | Partial | Moderate | Large (.NET ecosystem) | High | Open source |
| LlamaIndex | Data-query | Basic | Partial | Moderate | Medium | Moderate-High | Open source |
| Haystack | Pipeline | Basic | Partial | Moderate | Medium | High | Open source |
| Dify | Visual workflow | Moderate | Partial | Low | Small-Medium | Moderate | Open source + cloud |
| OpenDevin | Environment | Moderate | No | Steep | Small | Low-Moderate | Open source |

How to Choose the Right Framework for Your Team

Stop evaluating frameworks by feature lists. Start evaluating them by team context.

A mid-stage startup fell into exactly this trap. They picked LangChain because it had the most integrations. Three months later, they migrated to CrewAI because their 4-person team couldn't maintain the abstractions. The integration count was irrelevant; they only needed three.

Here’s the decision framework that actually works:

If your team has fewer than 3 engineers working on agents: Start with CrewAI or OpenAI Agents SDK. You need speed over flexibility. You can migrate later when complexity demands it.

If you’re building a data-heavy agent (RAG, document reasoning): LlamaIndex or Haystack. Don’t bolt retrieval capabilities onto a general agent framework when purpose-built tools exist.

If you’re in a Microsoft enterprise environment: Microsoft Agent Framework. The Azure integration alone saves weeks of plumbing work.

If you need complex, stateful multi-agent orchestration: LangGraph. Accept the learning curve. Nothing else gives you the same level of control over agent state transitions.

If you’re just starting and not sure what you need: Build your first agent with raw API calls. Seriously. One veteran developer in an online forum put it well: “Skip frameworks completely at first. Use the raw OpenAI Python SDK for a week or two.” You’ll understand what problems frameworks solve because you’ll hit those problems yourself. Then pick a framework that solves your actual pain points, not hypothetical ones.

If MCP adoption matters to your strategy: Claude Agent SDK has native MCP support, and LangChain's MCP integration is maturing. MCP is becoming the standard for how agents connect to external tools.
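The rules above can be written as a small function, which makes the precedence between them explicit. This is a sketch. The guide presents the rules side by side, so the order in which they are checked here is our assumption: first agent, then complex multi-agent needs, data-heavy work, Microsoft environment, MCP priority, and finally team size. `TeamContext` is a hypothetical type.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TeamContext:
    engineers_on_agents: int
    first_agent: bool = False  # just starting, unsure what you need
    complex_stateful_multi_agent: bool = False
    data_heavy: bool = False  # RAG, document reasoning
    microsoft_environment: bool = False
    mcp_priority: bool = False


def recommend(ctx: TeamContext) -> List[str]:
    """Apply the guide's rules in an assumed priority order; first match wins."""
    if ctx.first_agent:
        return ["raw API calls"]
    if ctx.complex_stateful_multi_agent:
        return ["LangGraph"]
    if ctx.data_heavy:
        return ["LlamaIndex", "Haystack"]
    if ctx.microsoft_environment:
        return ["Microsoft Agent Framework"]
    if ctx.mcp_priority:
        return ["Claude Agent SDK"]
    if ctx.engineers_on_agents < 3:
        return ["CrewAI", "OpenAI Agents SDK"]
    return []  # no rule matched
```

An empty result means none of the rules apply. In that case, prototype your actual use case in the closest candidates.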

When to Skip Frameworks Entirely

This is the advice no framework comparison gives you, because every comparison is written by someone with a framework to sell.

For single-agent systems with straightforward tool use, a custom harness built on raw API calls often outperforms any framework. You control the abstraction. You control the debugging surface. You control the token overhead.
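Here is roughly what a framework-free agent looks like. It is a loop that sends the conversation to the model, runs any tool the model requests, and appends the result, repeating until the model answers. `fake_model` is a hypothetical stand-in so the sketch runs offline. In a real agent you would replace it with a call through your provider's SDK, whose message and tool-call formats differ from the simplified dictionaries used here.

```python
import json
from typing import Any, Callable, Dict, List

Message = Dict[str, Any]

# Tool registry: plain Python functions the model is allowed to call.
TOOLS: Dict[str, Callable[..., Any]] = {
    "add": lambda a, b: a + b,
}


def fake_model(messages: List[Message]) -> Message:
    """Hypothetical stand-in for an LLM API call: requests one tool, then answers."""
    last = messages[-1]
    if last["role"] == "tool":
        return {"role": "assistant", "content": f"The answer is {last['content']}."}
    return {
        "role": "assistant",
        "tool_call": {"name": "add", "arguments": json.dumps({"a": 2, "b": 3})},
    }


def run_agent(
    user_input: str,
    model: Callable[[List[Message]], Message] = fake_model,
    tools: Dict[str, Callable[..., Any]] = TOOLS,
    max_turns: int = 5,
) -> str:
    messages: List[Message] = [{"role": "user", "content": user_input}]
    for _ in range(max_turns):
        reply = model(messages)
        messages.append(reply)
        call = reply.get("tool_call")
        if call is None:
            return reply["content"]  # no tool requested: this is the final answer
        # Run the requested tool and feed its result back to the model.
        result = tools[call["name"]](**json.loads(call["arguments"]))
        messages.append({"role": "tool", "name": call["name"], "content": str(result)})
    raise RuntimeError("agent did not finish within max_turns")
```

With the stub, `run_agent("What is 2 + 3?")` returns "The answer is 5.". The `max_turns` cap is the kind of guardrail a framework would otherwise provide for you.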

A common view among practitioners: for agents that call 2-3 tools in a linear workflow, frameworks add complexity without proportional value. One developer described it as “adding a hydraulic lift to change a lightbulb.”

Build custom when:

– Your agent has a single, well-defined task

– You need maximum control over token costs

– Your team has strong API experience

– You want zero framework lock-in

Use a framework when:

– You need multi-agent coordination

– You want pre-built integrations with your existing stack

– Your team needs the abstractions to move faster

– You need observability and debugging tools out of the box

The infrastructure layer that manages your agent’s execution, state, and reliability is the agent harness. You need one whether you use a framework or not. The question is whether you build it or use one that comes bundled.

MCP: The Standard That Changes Everything

Model Context Protocol (MCP) is becoming the decisive factor in framework selection for 2026. Developed by Anthropic, MCP standardizes how agents connect to external tools and data sources, similar to how REST APIs standardized web service communication.

Why this matters for your framework choice: frameworks that adopt MCP get access to a growing ecosystem of pre-built tool integrations. Instead of writing custom integrations for every database, API, and service, MCP-compatible frameworks share a universal integration layer.
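Under the hood, MCP messages are JSON-RPC 2.0. The sketch below builds a `tools/call` request and extracts text from the response using only the standard library. It leaves out the transport (stdio or HTTP), the initialization handshake, and tool discovery via `tools/list`. The tool name `get_weather` is hypothetical, and you should check the message shapes against the current MCP specification.

```python
import itertools
import json
from typing import Any, Dict, List

_request_ids = itertools.count(1)  # JSON-RPC ids must be unique per session


def tools_call_request(name: str, arguments: Dict[str, Any]) -> str:
    """Serialize an MCP tools/call request as a JSON-RPC 2.0 message."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    })


def text_from_response(raw: str) -> List[str]:
    """Return the text items of a tools/call result, raising on errors."""
    message = json.loads(raw)
    if "error" in message:  # protocol-level JSON-RPC error
        raise RuntimeError(message["error"].get("message", "JSON-RPC error"))
    result = message["result"]
    if result.get("isError"):  # the tool ran but reported a failure
        raise RuntimeError("tool reported an error")
    return [item["text"] for item in result.get("content", []) if item.get("type") == "text"]
```

Every MCP server uses the same request and response shapes. The same two helpers therefore work no matter which database, API, or service sits behind the tool.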

68% of organizations currently favor open-source frameworks over proprietary platforms. MCP amplifies this preference by making framework interoperability practical. Your agent code becomes more portable because the tool integration layer is standardized.

When evaluating frameworks, ask: does this framework support MCP? If not, what’s their roadmap? This single criterion will increasingly separate production-ready frameworks from legacy tools.

FAQ

Which AI agent framework is best for beginners?

CrewAI offers the fastest path from zero to working agent. Its role-based model matches how humans think about task delegation. That said, serious beginners should spend a week with raw API calls first to understand what frameworks abstract away.

Is LangChain still worth using in 2026?

Yes, but only for teams that need its scale. LangChain’s 600+ integrations and LangGraph’s stateful orchestration are unmatched. If you need fewer than 10 integrations, lighter frameworks will serve you better with less overhead.

What happened to Microsoft AutoGen?

Microsoft merged AutoGen with Semantic Kernel into a unified Agent Framework SDK in early 2026. AutoGen 0.2 enters maintenance mode. Existing AutoGen projects should plan migration to the new SDK. The AutoGen Studio no-code tool continues under the new umbrella.

Can I switch frameworks without rewriting everything?

Partially. Core agent logic (prompts, tool definitions) transfers between frameworks. Orchestration logic (how agents coordinate, state management) typically requires rewriting. MCP adoption makes tool integrations more portable. Budget 2-4 weeks for a framework migration on a medium-complexity system.

Do I need a framework for a single-agent system?

Usually not. A single agent calling a few tools works well with direct API integration. Frameworks earn their complexity cost when you need multi-agent coordination, complex state management, or production observability. Start simple and add a framework when the pain points justify it.

What to Do Next

The framework evaluation trap is real, but you can escape it in three steps.

This week: Build a simple agent with raw API calls. Hit the pain points yourself. This takes one afternoon and gives you a baseline for evaluating what frameworks actually solve.

Next week: Pick the framework that matches your team context from the decision matrix above. Prototype your actual use case, not a tutorial example. If it doesn’t feel right in 3 days, switch. The switching cost at this stage is near zero.

This month: Evaluate your framework’s harness capabilities. Does it handle state persistence, context management, and failure recovery? If not, you’ll need to build or adopt an agent harness to fill the gap.
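A quick way to test a harness for state persistence and failure recovery is to ask whether it can resume a task after a crash without redoing finished steps. The sketch below shows the basic pattern, with a JSON checkpoint file written after every step. Names such as `run_with_checkpoints` are hypothetical. Real harnesses typically store checkpoints in a database and must also handle steps whose side effects are not safe to repeat.

```python
import json
import os
import tempfile
from typing import Any, Callable, Dict, List

Step = Callable[[Dict[str, Any]], Dict[str, Any]]


def save_checkpoint(path: str, data: Dict[str, Any]) -> None:
    # Write to a temp file, then atomically replace, so a crash never
    # leaves a half-written checkpoint behind.
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory)
    with os.fdopen(fd, "w") as f:
        json.dump(data, f)
    os.replace(tmp_path, path)


def run_with_checkpoints(steps: List[Step], path: str) -> Dict[str, Any]:
    """Run steps in order, resuming after the last completed step if a checkpoint exists."""
    if os.path.exists(path):
        with open(path) as f:
            checkpoint = json.load(f)
    else:
        checkpoint = {"next_step": 0, "state": {}}
    for i in range(checkpoint["next_step"], len(steps)):
        checkpoint["state"] = steps[i](checkpoint["state"])
        checkpoint["next_step"] = i + 1
        save_checkpoint(path, checkpoint)  # persist progress after every step
    return checkpoint["state"]
```

If the process dies partway through, rerunning `run_with_checkpoints` with the same path skips the steps that already completed and picks up where the task stopped.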

The best framework is the one that gets out of your way and lets your team build. Pick one, commit for 30 days, and measure results instead of features.

For weekly framework reviews and production insights from engineers who’ve deployed these tools, subscribe to the agent-harness.ai newsletter.
